numpy/枕中非线性函数的数值梯度

我试图在numpy中实现一个数值梯度计算,作为cyipopt中梯度的回调函数。我对numpy梯度函数的理解是,它应该返回在一个基于有限差分逼近的点上计算的梯度。

我不明白如何用这个模块实现非线性函数的梯度。给出的样本问题似乎是一个线性函数。

>>> f = np.array([1, 2, 4, 7, 11, 16], dtype=np.float)

>>> np.gradient(f)

array([ 1. , 1.5, 2.5, 3.5, 4.5, 5. ])

>>> np.gradient(f, 2)

array([ 0.5 , 0.75, 1.25, 1.75, 2.25, 2.5 ])我的代码片段如下:

import numpy as np

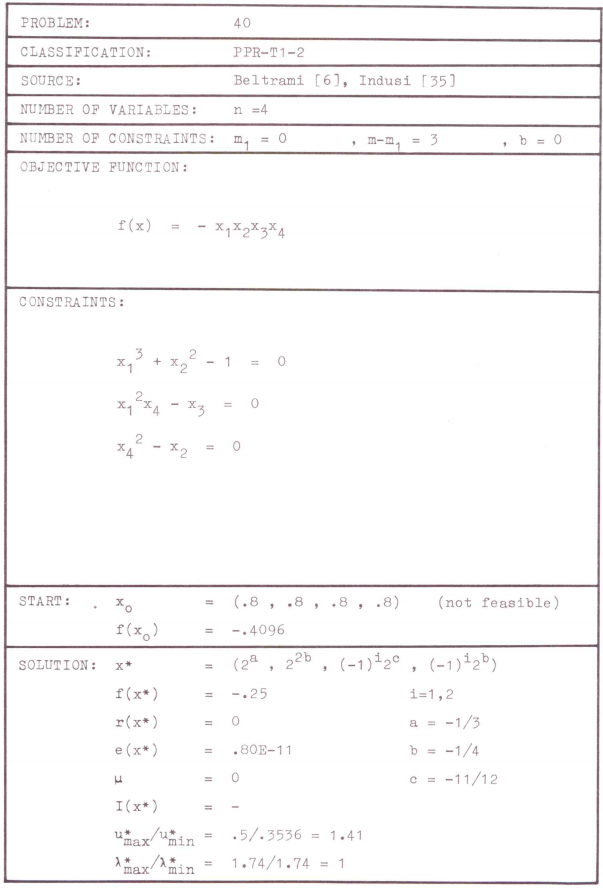

# Hock & Schittkowski test problem #40

x = np.mgrid[0.75:0.85:0.01, 0.75:0.8:0.01, 0.75:0.8:0.01, 0.75:0.8:0.01]

# target is evaluation at x = [0.8, 0.8, 0.8, 0.8]

f = -x[0] * x[1] * x[2] * x[3]

g = np.gradient(f)

print g它的另一个缺点是,我必须在几个点上计算x(并且在几个点返回梯度),在numpy/scipy中,是否有一个更好的选择,可以在单个点上对梯度进行数值计算,这样我就可以将其实现为回调函数了吗?

回答 2

Stack Overflow用户

发布于 2017-10-09 13:41:42

首先,有一些警告:

还有一些:

还有一些替代办法:

- 带解器的scipy.minimize或COBYLA,它可以为您做任何事情(SLSQP可以使用等式和不等式约束;COBYLA只使用不等式约束,其中模仿

x >= y+x <= y的等式约束是可行的)

用工具来完成你的任务

完整的示例问题在非线性规划码的测试实例中定义。

下面是一些基于数值微分的代码,用于解决您的测试问题,包括正式设置(函数、梯度、起点、边界、.)

import numpy as np

import scipy.sparse as sps

import ipopt

from scipy.optimize import approx_fprime

class Problem40(object):

""" # Hock & Schittkowski test problem #40

Basic structure follows:

- cyipopt example from https://pythonhosted.org/ipopt/tutorial.html#defining-the-problem

- which follows ipopt's docs from: https://www.coin-or.org/Ipopt/documentation/node22.html

Changes:

- numerical-diff using scipy for function & constraints

- removal of hessian-calculation

- we will use limited-memory approximation

- ipopt docs: https://www.coin-or.org/Ipopt/documentation/node31.html

- (because i'm too lazy to reason about the math; lagrange and co.)

"""

def __init__(self):

self.num_diff_eps = 1e-8 # maybe tuning needed!

def objective(self, x):

# callback for objective

return -np.prod(x) # -x1 x2 x3 x4

def constraint_0(self, x):

return np.array([x[0]**3 + x[1]**2 -1])

def constraint_1(self, x):

return np.array([x[0]**2 * x[3] - x[2]])

def constraint_2(self, x):

return np.array([x[3]**2 - x[1]])

def constraints(self, x):

# callback for constraints

return np.concatenate([self.constraint_0(x),

self.constraint_1(x),

self.constraint_2(x)])

def gradient(self, x):

# callback for gradient

return approx_fprime(x, self.objective, self.num_diff_eps)

def jacobian(self, x):

# callback for jacobian

return np.concatenate([

approx_fprime(x, self.constraint_0, self.num_diff_eps),

approx_fprime(x, self.constraint_1, self.num_diff_eps),

approx_fprime(x, self.constraint_2, self.num_diff_eps)])

def hessian(self, x, lagrange, obj_factor):

return False # we will use quasi-newton approaches to use hessian-info

# progress callback

def intermediate(

self,

alg_mod,

iter_count,

obj_value,

inf_pr,

inf_du,

mu,

d_norm,

regularization_size,

alpha_du,

alpha_pr,

ls_trials

):

print("Objective value at iteration #%d is - %g" % (iter_count, obj_value))

# Remaining problem definition; still following official source:

# http://www.ai7.uni-bayreuth.de/test_problem_coll.pdf

# start-point -> infeasible

x0 = [0.8, 0.8, 0.8, 0.8]

# variable-bounds -> empty => np.inf-approach deviates from cyipopt docs!

lb = [-np.inf, -np.inf, -np.inf, -np.inf]

ub = [np.inf, np.inf, np.inf, np.inf]

# constraint bounds -> c == 0 needed -> both bounds = 0

cl = [0, 0, 0]

cu = [0, 0, 0]

nlp = ipopt.problem(

n=len(x0),

m=len(cl),

problem_obj=Problem40(),

lb=lb,

ub=ub,

cl=cl,

cu=cu

)

# IMPORTANT: need to use limited-memory / lbfgs here as we didn't give a valid hessian-callback

nlp.addOption(b'hessian_approximation', b'limited-memory')

x, info = nlp.solve(x0)

print(x)

print(info)

# CORRECT RESULT & SUCCESSFUL STATE输出:

******************************************************************************

This program contains Ipopt, a library for large-scale nonlinear optimization.

Ipopt is released as open source code under the Eclipse Public License (EPL).

For more information visit http://projects.coin-or.org/Ipopt

******************************************************************************

This is Ipopt version 3.12.8, running with linear solver mumps.

NOTE: Other linear solvers might be more efficient (see Ipopt documentation).

Number of nonzeros in equality constraint Jacobian...: 12

Number of nonzeros in inequality constraint Jacobian.: 0

Number of nonzeros in Lagrangian Hessian.............: 0

Total number of variables............................: 4

variables with only lower bounds: 0

variables with lower and upper bounds: 0

variables with only upper bounds: 0

Total number of equality constraints.................: 3

Total number of inequality constraints...............: 0

inequality constraints with only lower bounds: 0

inequality constraints with lower and upper bounds: 0

inequality constraints with only upper bounds: 0

Objective value at iteration #0 is - -0.4096

iter objective inf_pr inf_du lg(mu) ||d|| lg(rg) alpha_du alpha_pr ls

0 -4.0960000e-01 2.88e-01 2.53e-02 0.0 0.00e+00 - 0.00e+00 0.00e+00 0

Objective value at iteration #1 is - -0.255391

1 -2.5539060e-01 1.28e-02 2.98e-01 -11.0 2.51e-01 - 1.00e+00 1.00e+00h 1

Objective value at iteration #2 is - -0.249299

2 -2.4929898e-01 8.29e-05 3.73e-01 -11.0 7.77e-03 - 1.00e+00 1.00e+00h 1

Objective value at iteration #3 is - -0.25077

3 -2.5076955e-01 1.32e-03 3.28e-01 -11.0 2.46e-02 - 1.00e+00 1.00e+00h 1

Objective value at iteration #4 is - -0.250025

4 -2.5002535e-01 4.06e-05 1.93e-02 -11.0 4.65e-03 - 1.00e+00 1.00e+00h 1

Objective value at iteration #5 is - -0.25

5 -2.5000038e-01 6.57e-07 1.70e-04 -11.0 5.46e-04 - 1.00e+00 1.00e+00h 1

Objective value at iteration #6 is - -0.25

6 -2.5000001e-01 2.18e-08 2.20e-06 -11.0 9.69e-05 - 1.00e+00 1.00e+00h 1

Objective value at iteration #7 is - -0.25

7 -2.5000000e-01 3.73e-12 4.42e-10 -11.0 1.27e-06 - 1.00e+00 1.00e+00h 1

Number of Iterations....: 7

(scaled) (unscaled)

Objective...............: -2.5000000000225586e-01 -2.5000000000225586e-01

Dual infeasibility......: 4.4218750883118219e-10 4.4218750883118219e-10

Constraint violation....: 3.7250202922223252e-12 3.7250202922223252e-12

Complementarity.........: 0.0000000000000000e+00 0.0000000000000000e+00

Overall NLP error.......: 4.4218750883118219e-10 4.4218750883118219e-10

Number of objective function evaluations = 8

Number of objective gradient evaluations = 8

Number of equality constraint evaluations = 8

Number of inequality constraint evaluations = 0

Number of equality constraint Jacobian evaluations = 8

Number of inequality constraint Jacobian evaluations = 0

Number of Lagrangian Hessian evaluations = 0

Total CPU secs in IPOPT (w/o function evaluations) = 0.016

Total CPU secs in NLP function evaluations = 0.000

EXIT: Optimal Solution Found.

[ 0.79370053 0.70710678 0.52973155 0.84089641]

{'x': array([ 0.79370053, 0.70710678, 0.52973155, 0.84089641]), 'g': array([ 3.72502029e-12, -3.93685085e-13, 5.86974913e-13]), 'obj_val': -0.25000000000225586, 'mult_g': array([ 0.49999999, -0.47193715, 0.35355339]), 'mult_x_L': array([ 0., 0., 0., 0.]), 'mult_x_U': array([ 0., 0., 0., 0.]), 'status': 0, 'status_msg': b'Algorithm terminated successfully at a locally optimal point, satisfying the convergence tolerances (can be specified by options).'}关于代码的备注

- 我们使用fprime,它基本上是为scipy.optimize中所有基于梯度的优化器添加的。

- 如消息来源所述,我不关心伊波普对麻雀的需求,我们使用了ipopts 哈森近似

- 基本思想在wiki: LBFGS上进行了描述

- 我确实忽略了ipopts对约束的Jacobian稀疏结构的需要。

- 默认假设:默认的恒心结构是一个较低的三角矩阵,我不会保证这里会发生什么(糟糕的性能与破坏一切)

Stack Overflow用户

发布于 2017-10-09 13:28:55

我认为你对什么是数学函数以及它的数值实现有某种误解。

您应该将您的功能定义为:

def func(x1, x2, x3, x4):

return -x1*x2*x3*x4现在,您希望在特定的点评估您的函数,可以使用您提供的np.mgrid进行评估。

如果要计算梯度,请使用copy.misc.derivative(https://docs.scipy.org/doc/scipy/reference/generated/scipy.misc.derivative.html) (注意dx通常不好的默认参数,将其更改为1e-5 )。数值计算中,线性梯度与非线性梯度没有差别,只是对于非线性函数,梯度在任何情况下都不一样。

您使用np.gradient实际上是从数组中的点计算梯度,函数的定义被f的定义所隐藏,因此不允许在不同的点上进行多个梯度计算。此外,使用您的方法使您依赖您的谨慎步骤。

https://stackoverflow.com/questions/46641453

复制相似问题